Why most pilots stall

Most enterprise AI pilots never deliver sustained business impact. In most cases, the root cause is not model performance - it is operating design.

The failure patterns are consistent:

- Pilots deployed without full integration into production environments

- Limited alignment between business and technology teams

- No clear feedback loops for continuous improvement

- Success defined by launch rather than scale

Lesson 1: Redesign comes first

The biggest mistake enterprises make is applying AI to broken workflows.

In a traditional service model, interactions move sequentially between systems and teams. In an agentic model, AI handles appropriate resolutions autonomously, while human agents focus on complex decisions, exceptions, and relationship-building.

This shift demands operating model redesign - not tool deployment - with integration across core customer systems and shared ownership across CX, IT, and operations leaders.

“Before you apply any new technology, you have to decide what to stop doing and then redesign the workflows. Otherwise, you risk automating bad processes.”

SVP, Transformation & Operations, IBM

Lesson 2: Target high-impact workflows first

Most stalled pilots begin in the safest possible place: low-risk, low-impact use cases, like simple FAQs or narrow interactions that demonstrate feasibility but do little to shift performance.

The teams scaling fastest start where operational pressure is highest: refund workflows, order inquiries, membership updates, reservation changes, multilingual rollouts. These journeys are often complex, fragmented across systems, and directly tied to cost-to-serve and customer satisfaction.

One global retailer focused first on refund and “Where is my order?” inquiries across multiple brands and languages. A multinational mobility provider prioritized reservation changes and cross-border support, where fragmented systems and regional variation had historically slowed resolution. In each case, starting with operationally significant workflows accelerated measurable impact and exposed integration gaps early.

Avoiding friction protects pilots. Solving friction unlocks scale.

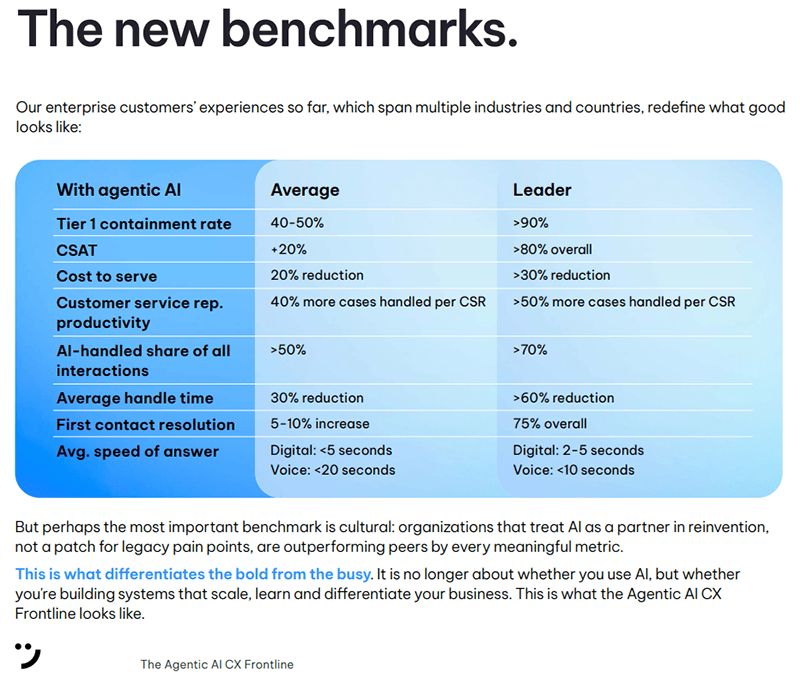

Lesson 3: Legacy KPIs misread performance in an agentic model

Most contact centers are measuring agentic AI performance with the wrong ruler.

Average handle time, for example, was built for a labor-driven service model. When AI autonomously resolves interactions, time and staffing are no longer the primary constraints. Applying legacy metrics to an agentic model can distort performance insights and mask real value.

Leading organizations are shifting toward outcome-based measurement:

- AI-handled share of interactions

- Tier-one containment rate

- Cost per resolution

- First contact resolution

- Customer satisfaction lift
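As a rough illustration, the five measures above could be computed from interaction records along these lines. This is a minimal sketch, not a standard: the `Interaction` fields, the `outcome_metrics` function, and the exact definition of each metric (for example, containment as AI-handled interactions resolved without escalation) are assumptions made for the example, and the sample data is invented.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Interaction:
    # Hypothetical record shape; real contact-center data will differ.
    ai_handled: bool      # an AI agent took the interaction
    escalated: bool       # handed off to a human agent
    resolved: bool        # the customer's issue was resolved
    first_contact: bool   # resolved without a repeat contact
    cost: float           # cost attributed to the interaction
    csat: float           # survey score, e.g. on a 1-5 scale


def outcome_metrics(interactions, baseline_csat):
    """Compute the five outcome measures under the assumed definitions."""
    if not interactions:
        raise ValueError("at least one interaction is required")
    total = len(interactions)
    ai = [i for i in interactions if i.ai_handled]
    # Assumed definition: contained = AI-handled, resolved, never escalated.
    contained = [i for i in ai if i.resolved and not i.escalated]
    resolved = [i for i in interactions if i.resolved]
    total_cost = sum(i.cost for i in interactions)
    fcr_count = sum(1 for i in interactions if i.resolved and i.first_contact)
    return {
        "ai_handled_share": len(ai) / total,
        "containment_rate": len(contained) / len(ai) if ai else 0.0,
        "cost_per_resolution": total_cost / len(resolved) if resolved else float("inf"),
        "first_contact_resolution": fcr_count / total,
        # Lift is measured against a pre-deployment baseline score.
        "csat_lift": mean(i.csat for i in interactions) - baseline_csat,
    }


# Invented sample data, for illustration only.
sample = [
    Interaction(True, False, True, True, 1.0, 5.0),
    Interaction(True, True, True, False, 6.0, 4.0),
    Interaction(False, False, True, True, 8.0, 4.0),
    Interaction(True, False, True, True, 1.0, 5.0),
]
metrics = outcome_metrics(sample, baseline_csat=4.0)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

None of these measures depends on handle time or staffing levels, which is the point of the shift: each one describes an outcome for the customer or the business rather than the labor spent on an interaction.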

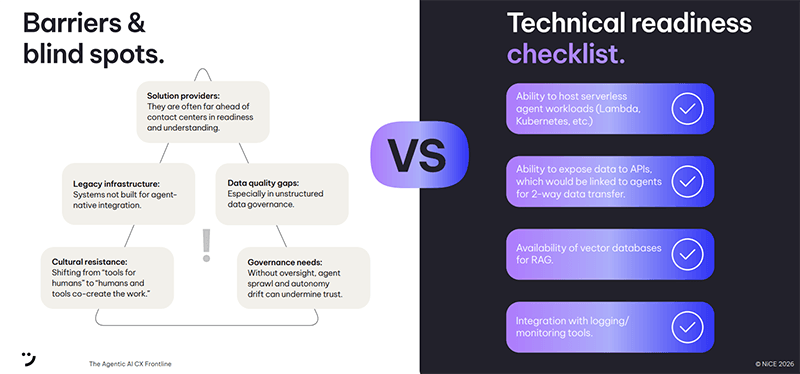

Lesson 4: Structure enables enterprise-scale autonomy

The fastest path to failed AI deployment is autonomy without accountability.

Enterprise-scale AI isn't just a technical challenge - it’s a governance challenge. The report identifies four blockers that consistently derail organizations before they reach scale: legacy infrastructure not built for agent-native integration, data quality gaps in unstructured data governance, cultural resistance to AI-human co-creation, and governance gaps that allow agent sprawl and autonomy drift to undermine trust.

The teams getting this right aren't relying on LLMs alone. One global fashion retailer put it directly: don't bet the house on prompt-only agents. Enforce policies with deterministic processes, then let LLMs handle natural, contextual conversation within those boundaries. That hybrid approach - structure where it matters, flexibility where it counts - is what makes enterprise-scale autonomy possible.

The practical conditions for it to work came through consistently in the report's frontline findings. Successful teams brought Ops, CX, Tech and Executive functions around a shared strategic vision early. They used robust analytics to navigate hype cycles and stay focused on what the data actually showed. And they built for flexibility, knowing that in a fast-moving environment, agility matters as much as ambition.

The governance question isn't separate from this. It's what makes the hybrid model hold. Leading teams embed monitoring, observability and escalation models from the outset, not after incidents. AI decisions are logged. Performance is measured at the journey level. Exceptions trigger defined human intervention. Quality assurance is designed in from day one, not added later as a compliance layer.

That combination - practitioner-led structure, cross-functional alignment, governance by design - is what separates deployments that scale from pilots that stall.

In the full report, we outline the governance patterns emerging among early adopters, including the readiness checks, oversight structures, and accountability models that distinguish enterprise-scale deployments from experimentation.
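The hybrid pattern described in this lesson (deterministic policy enforcement, logged decisions, and defined escalation, with the LLM confined to conversation) might look roughly like the sketch below. Everything in it is hypothetical: the refund scenario, the thresholds, and the names `RefundRequest`, `decide`, `draft_reply`, and `handle` are illustrative assumptions, and `draft_reply` is a plain-text stand-in for where an LLM call would go.

```python
import json
import logging
from dataclasses import dataclass

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent_decisions")

# Hypothetical policy thresholds, chosen only for illustration.
REFUND_AUTO_LIMIT = 100.00
REFUND_WINDOW_DAYS = 30


@dataclass
class RefundRequest:
    order_id: str
    amount: float
    days_since_delivery: int


def decide(request):
    """Deterministic policy check; no LLM is involved in the decision."""
    if request.days_since_delivery > REFUND_WINDOW_DAYS:
        return "escalate", "outside refund window"
    if request.amount > REFUND_AUTO_LIMIT:
        return "escalate", "amount above auto-approval limit"
    return "approve", "within policy"


def draft_reply(decision):
    """Stand-in for the LLM step, which only phrases a decision already made."""
    if decision == "approve":
        return "Your refund has been approved."
    return "I'm passing this to a colleague who can help further."


def handle(request):
    decision, reason = decide(request)
    # Log every decision so it can be audited and measured later.
    log.info(json.dumps({
        "order_id": request.order_id,
        "decision": decision,
        "reason": reason,
    }))
    return {"decision": decision, "reason": reason, "reply": draft_reply(decision)}


print(handle(RefundRequest("A-1001", 42.50, 5)))
print(handle(RefundRequest("A-1002", 450.00, 5)))
```

The design choice worth noting is the ordering: the policy outcome is fixed and logged before any language generation happens, so the conversational layer can vary its wording without being able to change what was decided.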

Lesson 5: Treat trust as a system requirement

Technical capability alone does not create enterprise-scale AI. The sharpest blocker isn't the model - it's trust.

Early data points to a significant gap between employees and leadership on AI. Workers see pilots that look like job cuts in disguise, minimal training, and thin explanations of how agents change roles or metrics. According to Stanford’s Digital Economy Lab, early-career workers in AI-exposed roles like customer service faced a 13% drop in employment compared to peers in less-exposed fields - and disruption hits hardest where AI is deployed as an outright replacement rather than augmentation. Meanwhile, nearly half of employees report no trust in their employer to implement AI in ways that benefit them. Left unaddressed, that distrust translates into resistance, workarounds, and stalled adoption.

The organizations moving beyond pilot mode treat trust as a design decision, not a communications exercise. They clarify early which decisions AI can make autonomously, where human judgment remains essential, and how accountability is structured when AI takes action. Many start with augmentation - AI supporting frontline agents first, handling repetitive tasks and surfacing contextual insights - and expand autonomy as confidence and performance increase.

Role redesign and upskilling are equally critical. Scaling teams invest in preparing agents for judgment-first work rather than task execution. Put frontline staff at the table. Publish role redesign plans. Fund real upskilling.

Where this discipline is absent, adoption slows. Resistance grows. Pilots stall. Where it is present, agentic AI becomes a frontline operating advantage rather than a source of disruption.